There was this one problem I just couldn’t tackle until now while coding my KC helper app: packets kept getting messed up. The whole design is based on opening a general-purpose socket with Python, and listening to all traffic to and from the KC game server. I based my code on a sniffer script I found on the net, tweaking it to my needs.

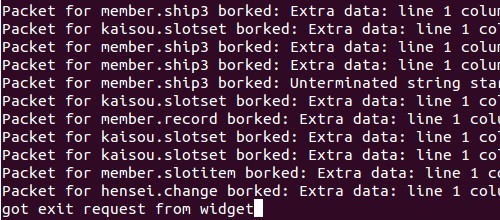

Most of the time, it worked just fine. And then, more often than I’d like, it gave me errors. The JSON decoder kept telling me about malformed input, but debugging that directly would’ve been quite pointless – I can’t really fix a borked JSON string on the fly, especially when it comes to strings 50 thousand or so characters long (and that’s the scale I often need to deal with here).

I gave the KC coders the benefit of the doubt and assumed that the JSON the server sends is actually not malformed. Thus the only way that it can still get borked between the network transmission and my JSON decoder is if my sniffer messes up. And that it did.

After a “short” debugging phase, which involved delving into the bitwise delights of TCP/IP headers, I figured out what the problem was: long responses from the server came fragmented into short packets, and the packets arrived in the wrong order.
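For a feel of what that header digging looks like, here is a minimal sketch that pulls a few fields out of a raw IPv4 packet carrying TCP. The function name `parse_headers` and the returned dictionary keys are made up for illustration, and it assumes the buffer starts at the IP header (no Ethernet frame in front of it).

```python
import struct

def parse_headers(packet):
    """Extract a few IPv4 and TCP header fields from a raw IPv4 packet."""
    # Low nibble of the first byte: IP header length in 32-bit words.
    ip_header_len = (packet[0] & 0x0F) * 4
    # Bytes 2-3: total packet length, bytes 4-5: IP identification field.
    total_length, ip_id = struct.unpack("!HH", packet[2:6])

    tcp = packet[ip_header_len:]
    # Ports, sequence number, ack number, then data offset + flags.
    src_port, dst_port, seq, ack, offset_flags = struct.unpack("!HHIIH", tcp[:14])
    tcp_header_len = (offset_flags >> 12) * 4  # top 4 bits, in 32-bit words
    flags = offset_flags & 0x01FF              # low 9 bits hold the flags

    payload = packet[ip_header_len + tcp_header_len:total_length]
    return {
        "ip_id": ip_id,
        "src_port": src_port,
        "dst_port": dst_port,
        "seq": seq,
        "fin": bool(flags & 0x01),  # FIN is the lowest flag bit
        "payload": payload,
    }
```

Slicing the payload up to `total_length` keeps any link-layer padding out of the data.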

Of course I was aware that long responses get split up and that I need to reassemble them – getting what is basically a sniffer to work without understanding even that is just impossible. What I didn’t realize is that the fragments can arrive in the wrong order. To be honest, considering how often that happened just while I was debugging, I don’t really understand how my code worked at all until now.

I fixed it by caching packets into a dictionary based on numbers I found in the TCP/IP headers and figured to be kind of the id of the packets. I must admit I still don’t really understand how the protocol headers work in action and what some of the flags do, but for now the sequence number from the TCP header and the id from the IP header seem to work.
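The caching idea can be sketched roughly like this. It is a simplified illustration, not the app's actual code: it keys the cache on the TCP sequence number alone, the names `cache` and `reassemble` are hypothetical, and it ignores sequence-number wraparound.

```python
def reassemble(segments):
    """Join cached payloads in ascending sequence-number order."""
    return b"".join(segments[seq] for seq in sorted(segments))

# Payloads cached as they arrive, keyed by TCP sequence number.
cache = {}
for seq, payload in [(1005, b" wor"), (1000, b"hello"), (1009, b"ld")]:
    cache[seq] = payload  # a retransmitted duplicate just overwrites its slot

message = reassemble(cache)
```

Because the dictionary is sorted only at reassembly time, the arrival order of the fragments stops mattering.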

As a side effect, this also made higher-level processing of the data simpler. Until now I had to wait for the next request-response pair to push out the previous one, since I had no way to know when a response actually ended. Thanks to the low-level concatenation of the fragments, I can now handle responses one by one, which is really convenient.

Of course this doesn’t guarantee that the results the higher-level code gets from the sniffer are actually correct – sadly every now and then there are still errors, and even more sadly, they tend to happen in sensitive spots. One such spot is when the game starts up and fetches the database of all ships – a response of a “gargantuan” half a megabyte (remember, this is plain text we’re talking about). It’s crucial for the correct functioning of my app that it’s able to catch (and cache) this response at least once in its entirety. However, roughly one reload out of ten ends in an error.

The order of the packets isn’t the problem: there seem to be packets missing (though usually just one). My only guess was that the missing packet came in after the packet with the FIN flag – not sure if that’s supposed to happen in an HTTP-based connection, but just in case I added a function that checks whether the ids of the fragments I caught are all consecutive, and if not, the sniffer keeps waiting for the missing packet. So even if some packets arrive after the one with FIN, they still don’t get left behind (except in the extremely unlikely case that the very first packet is missing).
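One way such a completeness check could look is sketched below. It is an assumption-laden variant: instead of comparing ids, it relies on the fact that each TCP segment's sequence number should equal the previous segment's sequence number plus its payload length. The name `is_complete` is hypothetical, and wraparound and overlapping retransmissions are ignored.

```python
def is_complete(segments):
    """Return True if the cached segments form one gap-free run of bytes.

    `segments` maps TCP sequence number -> payload bytes.
    """
    if not segments:
        return False
    expected = min(segments)
    for seq in sorted(segments):
        if seq != expected:
            return False  # a segment is missing before this one
        expected = seq + len(segments[seq])
    return True
```

Like the check described above, this cannot notice a missing first packet, since it takes the lowest sequence number it has seen as the start.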

But that still wasn’t enough – errors kept showing up, ergo packets kept going AWOL. I figured that the only way this can possibly happen is if my sniffer misses them – and the only way that can happen is if a packet comes in while the program is still busy processing the previous one. So I thought I’d cut the direct processing time, and do nothing with the packet at first, just put it straight into a Python internal queue for the processor function to handle. Sure, this requires spawning a separate thread, but that’s a small price to pay if it solves the problem – and so far it seems to work.
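The queue-plus-thread arrangement might look something like the sketch below. All names (`packet_queue`, `process_packets`, `capture_loop`, `processed`) are placeholders, and a plain list of byte strings stands in for the socket so the example runs without special privileges.

```python
import queue
import threading

packet_queue = queue.Queue()
processed = []  # stand-in for whatever the real processor produces

def process_packets():
    """Worker thread: take packets off the queue and do the slow work."""
    while True:
        packet = packet_queue.get()
        if packet is None:  # sentinel value: no more packets
            break
        processed.append(len(packet))  # placeholder for header parsing etc.

def capture_loop(packets):
    """Capture side: do nothing but hand each packet to the queue."""
    for packet in packets:  # a real sniffer would read from its socket here
        packet_queue.put(packet)

worker = threading.Thread(target=process_packets, daemon=True)
worker.start()

capture_loop([b"abc", b"de"])
packet_queue.put(None)  # tell the worker to stop
worker.join()
```

The capture loop only ever does a `put`, so the time between two reads from the socket stays as short as possible.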